Allan Adamson is the Oracle engineer in charge of this project. He works on Linux kernel development for the company's distribution, and he has now published an introduction to connecting NVMe flash storage over TCP.

Oracle Linux UEK5 is the release that introduced NVMe over Fabrics, which allows NVMe storage commands to be carried over networks such as InfiniBand or Ethernet using RDMA, both widely used in HPC and data centers. In UEK5U1, this support was extended to Fibre Channel as well.

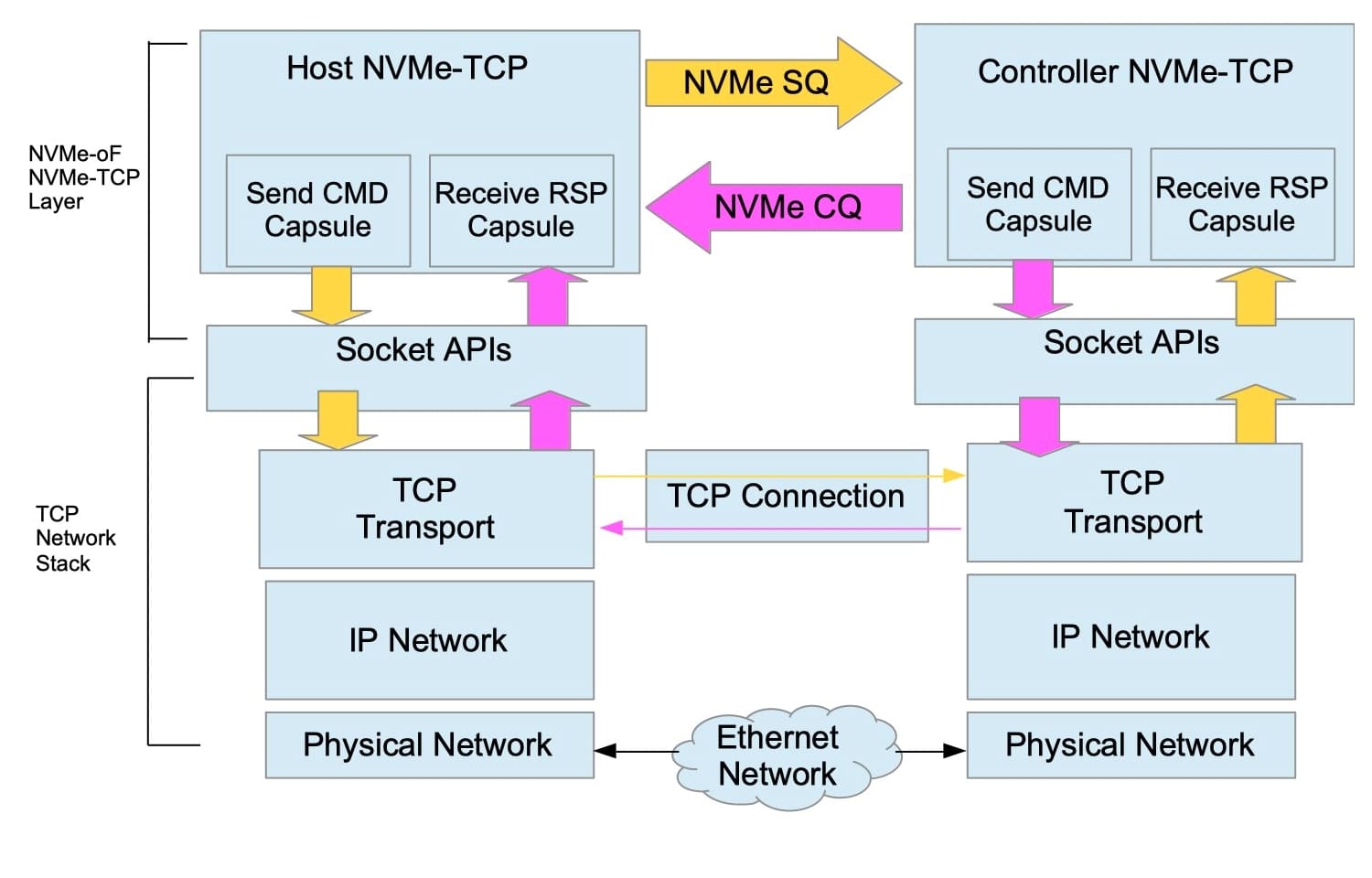

UEK6 now adds NVMe over TCP, which extends NVMe over Fabrics once more so that it works over standard Ethernet, without the need to purchase special RDMA-capable network hardware.

If you are wondering what NVMe over TCP is about, it helps to know that NVMe's multi-queue model supports up to about 64,000 I/O submission and completion queues, plus one admin submission queue and one admin completion queue, within each NVMe controller. For a PCIe-attached NVMe controller, these queues are implemented in host memory and are shared by the host CPUs and the NVMe controller.

I/O is submitted to an NVMe device when the device driver writes a command to a submission queue and then writes to a doorbell register to notify the device. When the command completes, the device writes an entry to an I/O completion queue and generates an interrupt to notify the device driver.
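The submit-and-complete cycle described above can be illustrated with a small toy model. This is only a sketch for intuition, not driver code: `QueuePair`, `ToyController`, `ring_doorbell`, and `submit_io` are hypothetical names invented for this example, and a real controller processes commands asynchronously rather than inside the doorbell write.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class QueuePair:
    """Toy model of one submission/completion queue pair in host memory."""
    submission: deque = field(default_factory=deque)
    completion: deque = field(default_factory=deque)


class ToyController:
    """Hypothetical stand-in for an NVMe controller (assumption, not a real API)."""

    def __init__(self, qp):
        self.qp = qp
        self.interrupts = 0

    def ring_doorbell(self):
        # The doorbell write tells the controller that new commands are waiting.
        while self.qp.submission:
            cmd_id, _opcode = self.qp.submission.popleft()
            # "Execute" the command, then post an entry to the completion queue.
            self.qp.completion.append((cmd_id, "success"))
        # Raise an interrupt so the driver knows completions are available.
        self.interrupts += 1


def submit_io(qp, controller, cmd_id, opcode):
    """Driver side: write the command to the queue, then notify the device."""
    qp.submission.append((cmd_id, opcode))
    controller.ring_doorbell()


qp = QueuePair()
ctrl = ToyController(qp)
submit_io(qp, ctrl, cmd_id=1, opcode="read")
submit_io(qp, ctrl, cmd_id=2, opcode="write")
print(list(qp.completion))
```

The point of the model is the ordering: command into the submission queue, doorbell write, completion entry, interrupt. With many such queue pairs, each CPU can drive its own pair without contending with the others.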

Source: Oracle

With NVMe over Fabrics, this basic scheme of submission and completion queues in host memory is extended so that the queues can also be duplicated in a remote controller, with each host-based queue pair mapped to a controller-based queue pair. That makes little sense for a desktop PC, but it can be very interesting for HPC systems and servers that need remote communication between nodes.
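As a rough illustration of that mapping, the sketch below pairs a host-side queue pair with a controller-side one and passes commands between them as byte messages. Everything here is an assumption made for the example: `HostQueuePair`, `RemoteController`, and the JSON encoding are hypothetical, and the real NVMe over TCP wire format is binary and defined by the NVMe specifications. A plain function call stands in for the network.

```python
import json
from collections import deque


class RemoteController:
    """Hypothetical remote controller holding its own copy of the queue pair."""

    def __init__(self):
        self.submission = deque()
        self.completion = deque()

    def receive(self, capsule: bytes) -> bytes:
        # Unpack the incoming message into the controller-side submission queue.
        self.submission.append(json.loads(capsule))
        cmd = self.submission.popleft()
        # Process the command and post to the controller-side completion queue.
        self.completion.append({"cmd_id": cmd["cmd_id"], "status": "success"})
        # Return the completion to the host as a response message.
        return json.dumps(self.completion.popleft()).encode()


class HostQueuePair:
    """Host-side queue pair mapped to one controller-side queue pair."""

    def __init__(self, transport):
        self.submission = deque()
        self.completion = deque()
        self.transport = transport  # callable taking bytes and returning bytes

    def submit(self, cmd_id, opcode):
        self.submission.append({"cmd_id": cmd_id, "opcode": opcode})

    def flush(self):
        # Send every queued command over the transport and collect completions.
        while self.submission:
            capsule = json.dumps(self.submission.popleft()).encode()
            response = self.transport(capsule)
            self.completion.append(json.loads(response))


remote = RemoteController()
host = HostQueuePair(transport=remote.receive)  # function call stands in for TCP
host.submit(1, "read")
host.submit(2, "write")
host.flush()
print(list(host.completion))
```

Because the host only sees a callable that takes and returns bytes, the same queue logic could in principle sit on top of RDMA, Fibre Channel, or a TCP socket, which is the idea behind supporting several fabrics.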

If this development translates into higher data throughput, it is very welcome.