Cilium 1.4 has been released. The project, developed with the participation of Google, Facebook, Netflix and Red Hat, provides a system for network connectivity and security policy enforcement for isolated containers and processes.

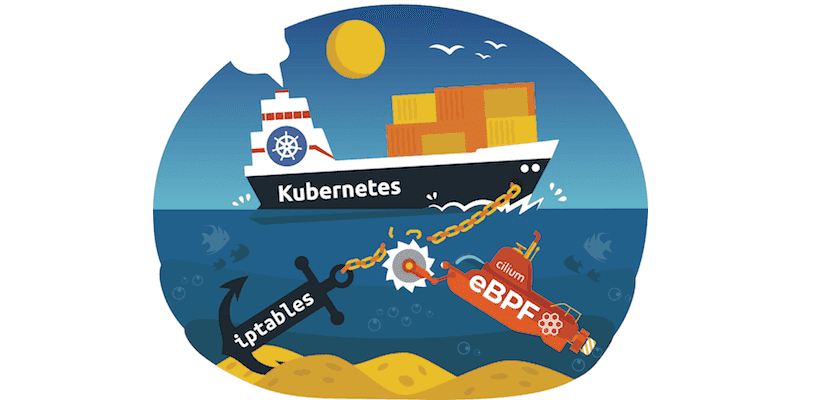

To control network access, Cilium uses eBPF (extended Berkeley Packet Filter) and XDP (eXpress Data Path). The code for the user-space components is written in Go and is distributed under the Apache 2.0 license.

The BPF programs loaded into the Linux kernel are available under the GPLv2 license.

About Cilium

The core of Cilium is a background process that runs in user space. It generates and compiles BPF programs and interacts with the container runtime.

Container connectivity, integration with the network subsystem (physical and virtual networks, VXLAN, Geneve) and load balancing are all implemented as BPF programs.

The background process is supplemented by a management interface, a repository of access rules, a monitoring system and integration modules for Kubernetes, Mesos, Istio and Docker.

With a large number of services and connections, a Cilium-based solution performs about twice as well as iptables-based solutions, because iptables incurs a high overhead when searching through its rules.
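The difference in lookup cost can be illustrated with a small model. This is only a sketch under stated assumptions: the service table, the addresses and both function names are hypothetical, and the code models the general contrast between scanning an ordered rule list and a single hash-table lookup. It is not Cilium's or iptables' actual implementation.

```python
from typing import Optional, Tuple

# Hypothetical service table: (virtual IP, port) -> backend address.
SERVICES = {
    (f"10.0.{i // 256}.{i % 256}", 80): f"192.168.{i // 256}.{i % 256}"
    for i in range(1000)
}

# Sequential model: an ordered list of rules that is checked one by one.
RULE_LIST = list(SERVICES.items())


def lookup_linear(dst: Tuple[str, int]) -> Tuple[Optional[str], int]:
    """Scan the rules in order; return the backend and the number of rules inspected."""
    for checked, (match, backend) in enumerate(RULE_LIST, start=1):
        if match == dst:
            return backend, checked
    return None, len(RULE_LIST)


def lookup_hashed(dst: Tuple[str, int]) -> Optional[str]:
    """Hash-table model: one dictionary lookup, independent of table size."""
    return SERVICES.get(dst)
```

In this model, the cost of `lookup_linear` grows with the position of the matching rule, while `lookup_hashed` does the same amount of work regardless of how many services exist.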

Main innovations

Cilium can now route and forward service traffic between multiple Kubernetes clusters.

The concept of global services is also introduced: a variant of standard Kubernetes services whose backends are located in multiple clusters.

There are also tools for defining rules that govern how DNS requests and responses are handled for groups of containers (pods), which gives more control over how containers use external resources.

All DNS requests and responses can also be logged together with the pods they belong to. In addition to access rules at the IP address level, you can now specify which DNS queries and DNS responses are permitted and which should be blocked.

For example, you can block access to specific domains or allow requests for the local domain only, without having to track changes in the mapping of domains to IP addresses.

This includes the ability to use the IP addresses returned by a DNS request to restrict subsequent network operations (for example, you can allow access only to IP addresses that were returned during DNS resolution).
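The idea of tying connection rules to DNS results can be sketched as follows. This is an illustrative model only: the class `DnsAwarePolicy`, its method names and the shell-style wildcard patterns are assumptions made for this example and are not Cilium's policy API. The sketch checks each DNS query against the permitted name patterns and records the IP addresses returned for permitted names. A later connection is allowed only if its destination is one of those recorded addresses.

```python
from fnmatch import fnmatchcase
from typing import Iterable, List, Set


class DnsAwarePolicy:
    """Hypothetical model of a DNS-aware egress policy for one pod."""

    def __init__(self, allowed_patterns: Iterable[str]) -> None:
        # Domain patterns the pod may resolve, e.g. "*.example.com".
        self.allowed_patterns: List[str] = [p.lower() for p in allowed_patterns]
        # IP addresses learned from DNS responses for permitted names.
        self.allowed_ips: Set[str] = set()

    def allow_query(self, name: str) -> bool:
        """Return True if the queried name matches a permitted pattern."""
        name = name.lower().rstrip(".")
        return any(fnmatchcase(name, p) for p in self.allowed_patterns)

    def observe_response(self, name: str, ips: Iterable[str]) -> None:
        """Record the IPs from a DNS response, but only for permitted names."""
        if self.allow_query(name):
            self.allowed_ips.update(ips)

    def allow_connection(self, ip: str) -> bool:
        """Allow a connection only to an IP learned through permitted DNS."""
        return ip in self.allowed_ips
```

Because the set of permitted addresses is built from observed DNS responses, the policy follows a domain when its IP addresses change and does not need a hand-maintained address list.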

Main new features of version 1.4 of Cilium

The new version adds experimental support for transparent encryption of all traffic between services. Encryption can be used for traffic between different clusters as well as within a single cluster.

The ability to authenticate nodes has also been added, which allows a cluster to be deployed on an untrusted network.

If the backends that serve a service in one cluster fail, the new functionality can automatically redirect traffic to that service's backends in another cluster.
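One possible way to picture this failover behaviour is the selection rule below. It is a minimal sketch, not Cilium's actual algorithm: the `Backend` record, the cluster names and the "prefer healthy local backends, otherwise use healthy remote ones" rule are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Backend:
    """Hypothetical backend record for a service that spans clusters."""
    address: str
    cluster: str
    healthy: bool


def pick_backends(backends: List[Backend], local_cluster: str) -> List[Backend]:
    """Return healthy local backends, or healthy remote ones if none are left."""
    healthy = [b for b in backends if b.healthy]
    local = [b for b in healthy if b.cluster == local_cluster]
    # Fall back to backends in other clusters only when the local ones are gone.
    return local or healthy
```

With this rule, traffic stays inside the local cluster while at least one local backend is healthy and moves to another cluster only when all local backends have failed.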

Experimental support for IPVLAN network interfaces has been added, which gives higher performance and lower latency for communication between two local containers.

A module for Flannel integration has been added. Flannel is a system for automating the configuration of networking between nodes in a Kubernetes cluster. The module allows Cilium to run alongside Flannel or on top of it (Flannel provides network connectivity, Cilium provides load balancing and access policies).

Experimental support has been added for defining access rules based on AWS (Amazon Web Services) metadata, such as EC2 tags, security groups and VPC names.

It is now possible to run Cilium on GKE (Google Kubernetes Engine on Google Cloud) using COS (Container-Optimized OS).

Experimental support has been added for using BPF sockmap to speed up communication between local processes (for example, between a sidecar proxy and the local processes it serves).